Code-Based Workflows With Durable Functions in Azure

Azure Functions is a handy solution that lets you focus on just the functionality your code needs to perform, not on how to package and deploy your application. You write your functionality as functions and deploy them to Azure. In my previous post, building applications with Azure Functions, I talked about a few things I’ve thought about when building function applications. Be sure to check that out too.

This post, however, is about Durable Functions, an extension to Azure Functions. With Durable Functions, you create code-based workflows as orchestrations. An orchestration has state that is managed by the Durable Functions runtime, so you don’t have to do anything to manage it yourself. You also get built-in retries for functions that throw exceptions: you specify a retry policy when you call a function, and the runtime takes care of the rest without you writing any retry logic. You just focus on the task your functions need to perform, not on the supporting infrastructure.

Be sure to also check out my Durable Functions Pitfalls article, which I wrote after this article was originally published.

Sample Code

So that you can get your hands dirty and dig into the code, I wrote a sample application to go with this post. The code is available on GitHub, in the Code-Based Workflows with Durable Functions in Azure repository.

Different Types of Functions

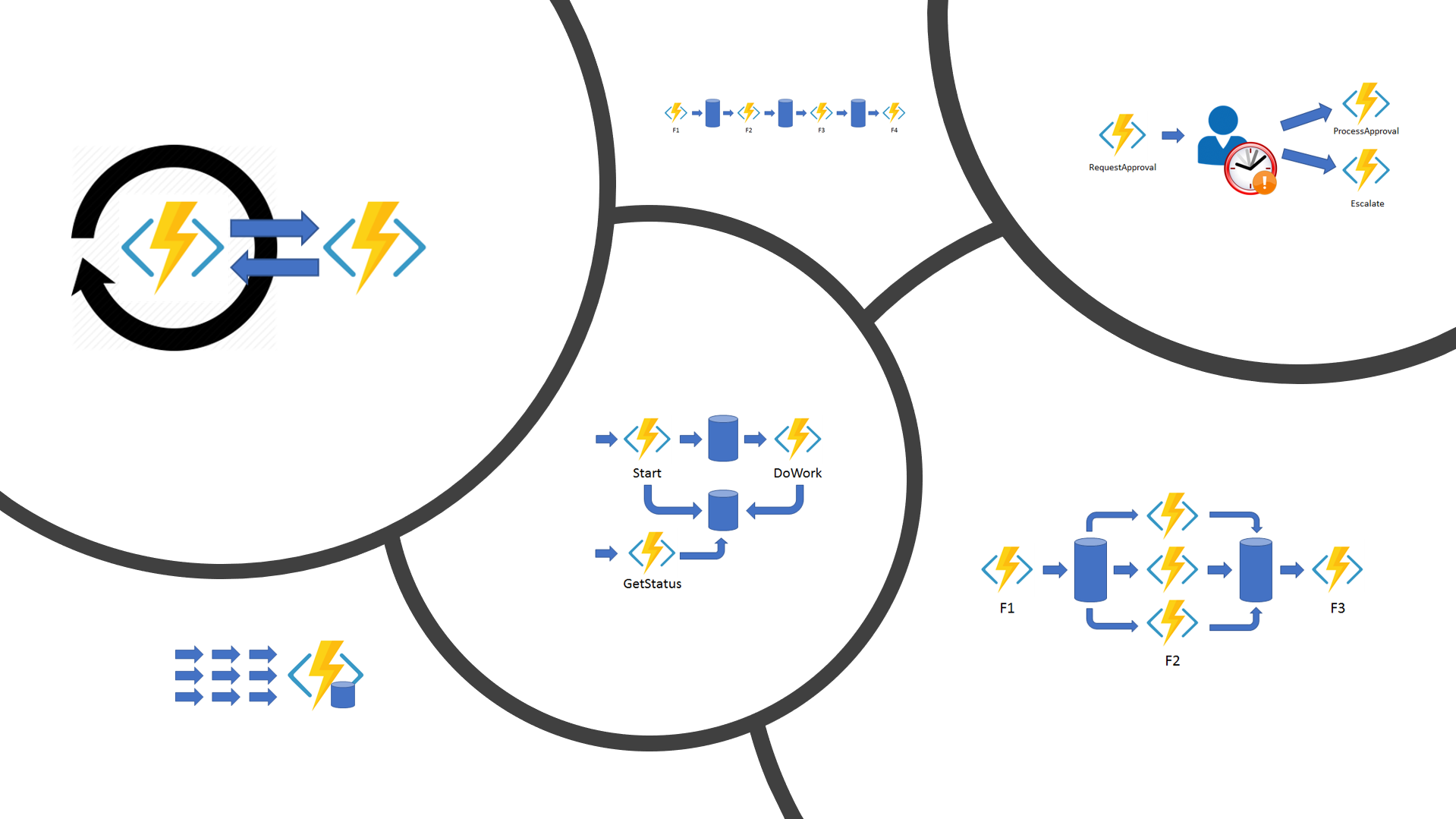

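There are different types of functions involved in an orchestration. I’ve described them in more detail in the subsections below. In short, these types are:

- Triggering functions – Start new orchestrations.

- Orchestration functions – Contain the workflow logic and call the other functions.

- Activity functions – Perform the actual work, such as database or API calls.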

In the next version of Durable Functions there will be a new type of function – Entity Function. I’ll write about Durable Functions v2 in a later post, so stay tuned.

Triggering Functions

Although technically not part of the Durable Functions stack, triggering functions are still a key part of writing code-based workflows with Durable Functions. They are the functions you use to start new orchestrations.

You can use any standard function to start a new Durable Functions orchestration. I mostly find myself starting new orchestrations from the following types of functions:

- HTTP triggered function – Called from an external application with some sort of payload to process in the orchestration.

- Blob triggered function – Starts a new orchestration to process a file that lands in a blob container.

With HTTP triggered functions, there’s one very handy trick that you can use. You can return an object that defines URLs that allow the caller of the HTTP triggered function to interact with the orchestration that was started.

This is shown in the sample code for this post on GitHub, in the HttpAPIFunctions class. The response from this HTTP triggered function is a JSON document that defines URIs for various interactions with the started orchestration. For instance, you can:

- Query the status of the orchestration.

- Terminate the orchestration.

- Send an event to the orchestration.

There are a few others, but I consider these the most useful.
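To make the trick above a bit more concrete, here is a minimal client-side sketch in Python. It assumes the HTTP triggered function returns the built-in check status response (in C#, `CreateCheckStatusResponse` on the orchestration client), whose JSON contains fields such as `id`, `statusQueryGetUri`, `sendEventPostUri` and `terminatePostUri`, with `{eventName}` and `{text}` placeholders in the last two. The helper names and the sample payload are hypothetical, and the sketch only builds the URLs; it doesn’t send any requests.

```python
import json
from urllib.parse import quote


def management_urls(response_body: str) -> dict:
    """Pick the management URLs out of the starter function's JSON response."""
    payload = json.loads(response_body)
    return {
        "id": payload["id"],
        "status": payload["statusQueryGetUri"],    # GET: query the status
        "event": payload["sendEventPostUri"],      # POST: raise an event
        "terminate": payload["terminatePostUri"],  # POST: terminate
    }


def event_url(urls: dict, event_name: str) -> str:
    """Fill in the {eventName} placeholder of the send-event URL."""
    return urls["event"].replace("{eventName}", quote(event_name, safe=""))


def terminate_url(urls: dict, reason: str) -> str:
    """Fill in the {text} placeholder (the termination reason)."""
    return urls["terminate"].replace("{text}", quote(reason, safe=""))


# Hypothetical response body; host, instance id and query string are made up.
base = "https://example.net/instances/abc123"
sample_body = json.dumps({
    "id": "abc123",
    "statusQueryGetUri": base + "?code=XYZ",
    "sendEventPostUri": base + "/raiseEvent/{eventName}?code=XYZ",
    "terminatePostUri": base + "/terminate?reason={text}&code=XYZ",
})

urls = management_urls(sample_body)
print(urls["status"])
print(event_url(urls, "ApprovalReceived"))
print(terminate_url(urls, "No longer needed"))
```

Because the caller only follows URLs it was handed, it doesn’t need to know how the orchestration endpoints are laid out.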

Orchestration Functions

An orchestration function is the starting point of your workflow, and it is where most of your workflow logic lives. Unlike in Logic Apps or Power Automate (formerly Microsoft Flow), your workflow logic is code, not boxes on a designer canvas.

Orchestration functions are stateful. The state records which sub-orchestrations and activity functions have been called in the orchestration, along with their results. Sub-orchestrations and activity functions are invoked using the orchestration context. Have a look at the sample code to see how you call activity functions with the context object, and be sure to read the section about calling activity functions below.

You define an orchestration function by creating a normal function method with the FunctionName attribute. In addition, you need to specify a DurableOrchestrationContext parameter decorated with the OrchestrationTrigger attribute. Read more about orchestration functions in the documentation, and be sure to also check out the sample code for how orchestration functions are defined.

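The most important thing to remember about orchestration functions is that you must not call any async methods from them, except for the operations the orchestration context provides, such as calling sub-orchestrations and activity functions. A sub-orchestration is just a normal orchestration function that is called from another orchestration function. The reason for this rule is that the runtime replays an orchestration function from the beginning every time it resumes, using the recorded history to skip work that has already completed. Any I/O the function does directly therefore runs again on every replay.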

If there’s only one thing you’re going to remember from this post, let it be this! You cannot imagine how many times I’ve banged my head on the keyboard because my orchestrations weren’t working, only to realize much later that I had invoked a database operation or accessed a storage account directly from an orchestration function.

So remember, no async calls from orchestration functions!
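To see why direct calls hurt, here is a toy model of replay in Python, where the orchestrator is a generator that yields activity requests. It is a simplification, not the Durable Functions runtime: it runs at most one new activity per episode, and names such as `run_episode`, `say_hello` and `hello_orchestrator` are made up for illustration. The direct call at the top of the orchestrator runs once per replay, while each activity runs only once because its result is served from history afterwards.

```python
from typing import Callable, Dict, Generator, List, Tuple

# A request from the orchestrator: (activity name, input).
Request = Tuple[str, object]
Orchestrator = Callable[[], Generator[Request, object, object]]


def run_episode(orchestrator: Orchestrator, history: List[object],
                activities: Dict[str, Callable[[object], object]]) -> Tuple[bool, object]:
    """Run the orchestrator from the top, replaying results already in history.

    Simplification: at most one new activity runs per episode, and its result
    is appended to history (the toy's version of a checkpoint).
    """
    gen = orchestrator()
    try:
        request = next(gen)
        for recorded in history:      # replay: hand back stored results
            request = gen.send(recorded)
        name, arg = request           # first call not yet in history
    except StopIteration as done:
        return True, done.value
    history.append(activities[name](arg))  # actually execute the activity
    gen.close()                            # the orchestrator is unloaded
    return False, None


def run_to_completion(orchestrator: Orchestrator,
                      activities: Dict[str, Callable[[object], object]]) -> Tuple[object, int]:
    """Keep replaying until the orchestrator returns; count the episodes."""
    history: List[object] = []
    episodes = 0
    while True:
        episodes += 1
        finished, result = run_episode(orchestrator, history, activities)
        if finished:
            return result, episodes


side_effects: List[str] = []
activity_calls: List[str] = []


def say_hello(city: object) -> str:
    """Toy activity function: runs only when its result is not yet recorded."""
    activity_calls.append(str(city))
    return f"Hello {city}!"


def hello_orchestrator():
    # WRONG in a real orchestrator: a direct call (think database write)
    # is executed again on every replay.
    side_effects.append("direct call")
    first = yield ("say_hello", "Tokyo")
    second = yield ("say_hello", "Seattle")
    return [first, second]


result, episodes = run_to_completion(hello_orchestrator, {"say_hello": say_hello})
print(result)                                      # ['Hello Tokyo!', 'Hello Seattle!']
print(episodes, len(side_effects), activity_calls)  # 3 3 ['Tokyo', 'Seattle']
```

Each activity ran once, but the "database write" at the top of the orchestrator ran three times, once per replay.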

Activity Functions

An activity function is a function that is called from an orchestration function. Typically, an activity function performs async tasks such as invoking a database command or calling other services or APIs.

Activity functions are stateless, so they behave much like standard async methods. You can also call any other methods, either sync or async, from an activity function.

You define an activity function as a standard async function with the FunctionName attribute. Activity functions also need a context object, but its type is DurableActivityContext, and the context parameter must be decorated with the ActivityTrigger attribute. Read more about activity functions in the documentation, and be sure to check out the sample code for how activity functions are defined.

Calling Activity Functions

The robustness of your orchestrations and the integrity of their state depend heavily on how you call your activity functions. This is also where the Durable Functions runtime gives you a lot of functionality for free. Sometimes your code will fail, especially if it relies on external systems that you access over a network. A system can be down for maintenance, the network might be down, or something else entirely can go wrong.

When you call an activity function, you can specify the retry options to apply when calling the activity. The Durable Functions runtime then uses these options if the activity function fails, i.e., throws an exception that the activity function does not handle.

It can be overkill to specify the retry options separately for every activity function you call, not to mention the work involved if you later want to change the maximum number of retries from 5 to 10. That’s why I created an extension method, CallActivityWithDefaultRetryAsync, that you can use in your orchestration functions, as demonstrated in the sample code.
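The extension method from the sample code isn’t reproduced here, but the idea is simply to keep the retry policy in one place. In C#, the orchestration context has `CallActivityWithRetryAsync`, which takes a `RetryOptions` object with settings such as the first retry interval, the maximum number of attempts and a backoff coefficient. The Python sketch below only models those semantics so they can be run outside Azure. `RetryPolicy`, `DEFAULT_RETRY`, `call_with_default_retry` and `flaky_activity` are hypothetical names, and the injectable `sleep` parameter stands in for the waiting the runtime does between attempts.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class RetryPolicy:
    """Hypothetical mirror of the main RetryOptions settings."""
    first_retry_interval: float       # seconds to wait before the first retry
    max_number_of_attempts: int       # total attempts, including the first call
    backoff_coefficient: float = 1.0  # multiplier applied to each following wait
    max_retry_interval: float = float("inf")

    def delays(self) -> List[float]:
        """Waits between consecutive attempts, capped at max_retry_interval."""
        return [
            min(self.first_retry_interval * self.backoff_coefficient ** i,
                self.max_retry_interval)
            for i in range(self.max_number_of_attempts - 1)
        ]


# One place to change the defaults, e.g. from 5 to 10 attempts.
DEFAULT_RETRY = RetryPolicy(first_retry_interval=5, max_number_of_attempts=5,
                            backoff_coefficient=2, max_retry_interval=60)


def call_with_default_retry(activity: Callable[[object], T], arg: object,
                            policy: RetryPolicy = DEFAULT_RETRY,
                            sleep: Callable[[float], None] = time.sleep) -> T:
    """Call the activity, retrying on exceptions according to the policy."""
    delays = policy.delays()
    attempt = 0
    while True:
        try:
            return activity(arg)
        except Exception:
            if attempt >= len(delays):  # no attempts left: surface the error
                raise
            sleep(delays[attempt])
            attempt += 1


# Usage: a hypothetical activity that fails twice before succeeding.
failures = iter([ConnectionError("network down"), TimeoutError("timed out")])
waits: List[float] = []


def flaky_activity(order_id: object) -> str:
    error = next(failures, None)
    if error is not None:
        raise error
    return f"processed {order_id}"


result = call_with_default_retry(flaky_activity, 42, sleep=waits.append)
print(result, waits)  # processed 42 [5, 10]
```

Orchestrations call the helper without repeating the policy, so changing `DEFAULT_RETRY` changes the behavior everywhere.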

Summary

I’ve built quite a lot of solutions with the help of Durable Functions. It’s not a silver bullet that will protect your solutions from all the harm in the world, but in some use cases it is very useful and will definitely simplify your code.

A very typical use case for Durable Functions is background processing of all kinds: tasks where the caller doesn’t need an immediate response, or any response at all. These are typically started either on a schedule, using an Azure Functions timer trigger or an HTTP trigger invoked by an external scheduler, or by an HTTP trigger in response to a user action.

It should also be obvious that the Durable Functions runtime does not make your code run any faster. Managing the orchestration state adds some overhead, so if you have something that is very performance-critical, Durable Functions might not be the right answer.

Other Workflow Technologies

So, what about other workflow technologies, for instance Logic Apps? Well, I’d like to think that they complement each other. You have probably tried Logic Apps or Microsoft Flow and created a few workflows with them. Then you have probably also noticed that they easily become verbose, and that your workflow designer canvas fills up with activities, making it very hard to see the big picture.

That’s where you could leverage Durable Functions too. You create your business logic in Logic Apps and hand off the more technical tasks to workflows built with Durable Functions. This can make your workflows in the designer a lot clearer, since they don’t have to take care of all the nitty-gritty details.

One thing I’m sure of is that Durable Functions will continue to be a trusted tool in the toolbox of technologies I rely on when building solutions in Azure.

Have a nice day!
